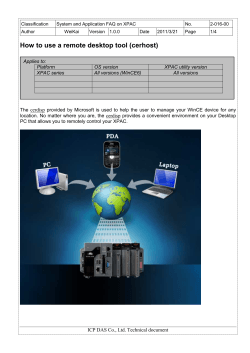

LMD Martian Mesoscale Model [LMD-MMM] User Manual